Dropbox support experience

Dropbox is a well-known global SaaS product, but it suffers from a subpar support experience.

Why is this?

❌ Consistently low customer satisfaction (CSAT) scores

❌ Unsavory, off-brand UX

❌ Does not match industry standards

❌ Siloing of support properties and their respective teams

❌ No ownership, framework, or roadmap

❌ Historically low design resourcing within the Dropbox CX org

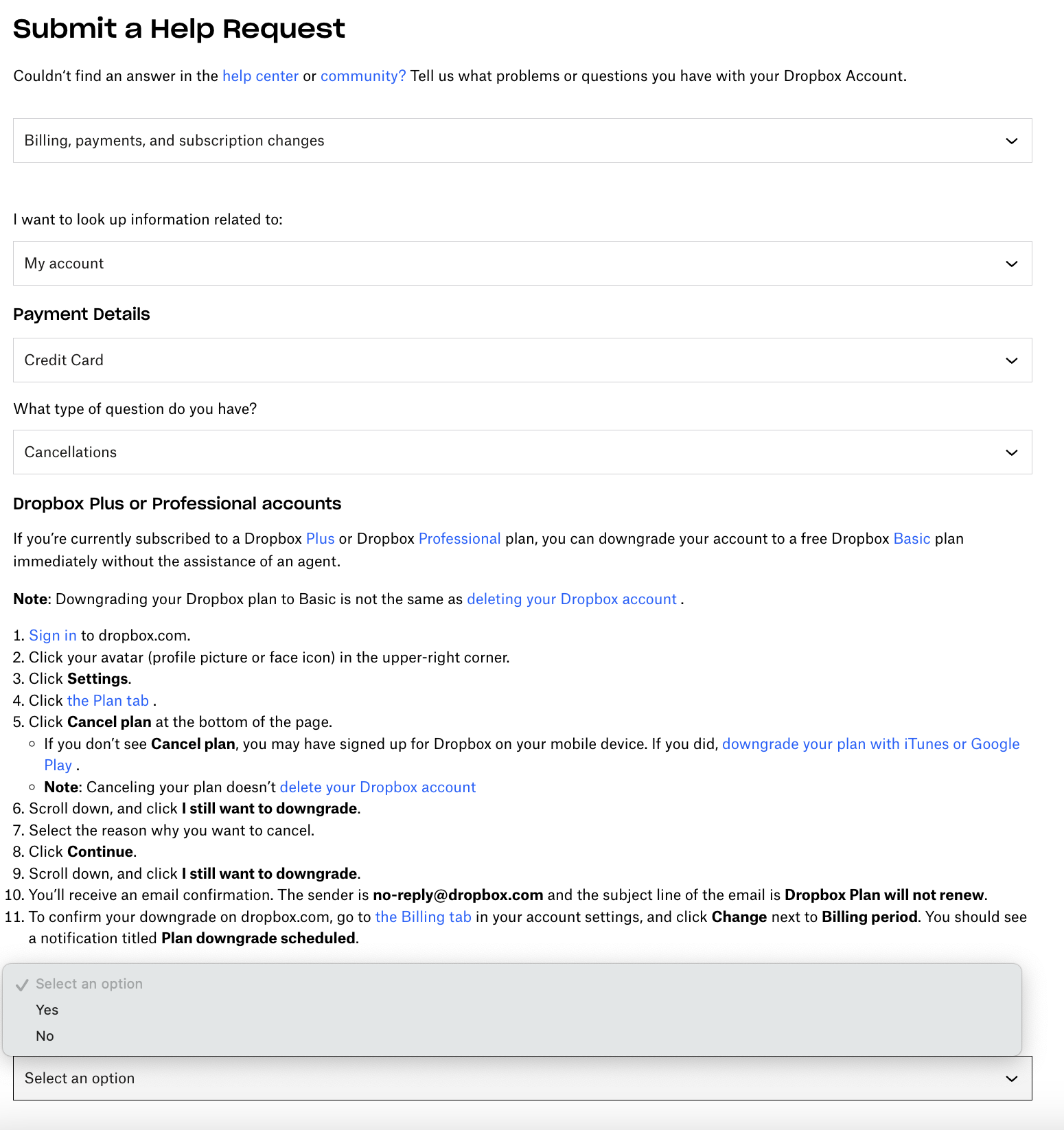

One section of one of the scary email support forms 😱

Some of these problems carried significant implications. Here's why:

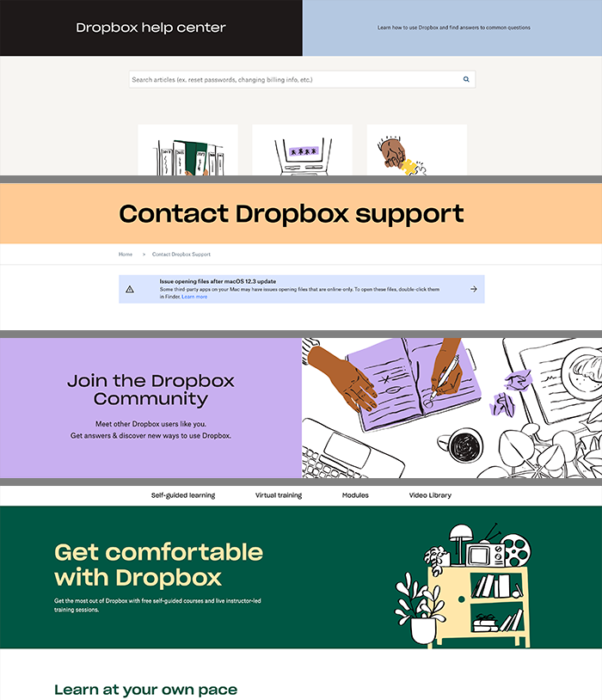

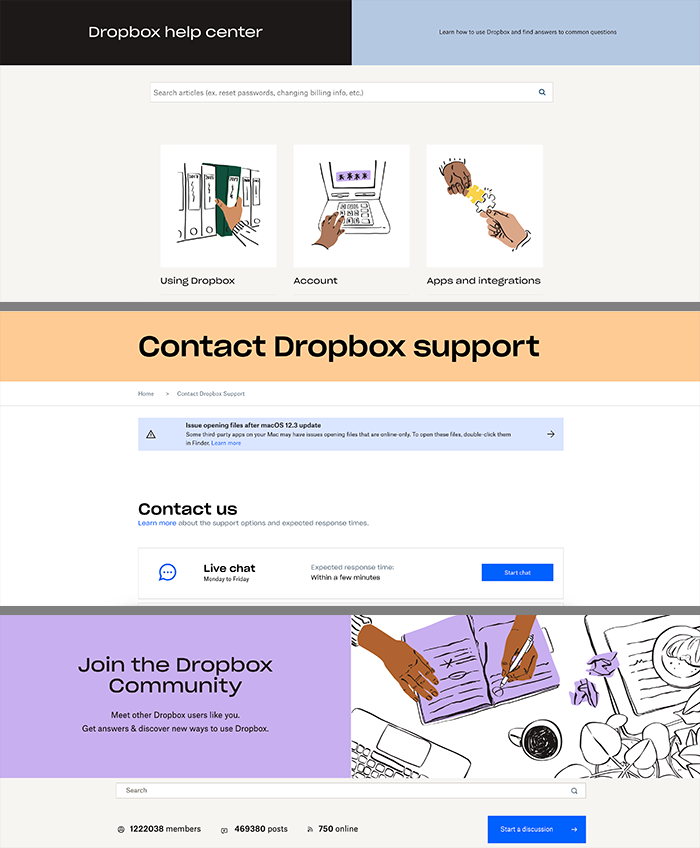

Siloing of support properties meant that each of Dropbox's 4 support surfaces (help center, support, Community, and Learn) lived on its own unique CMS*. This constrained our ability to make help content portable and reusable, for example, displaying help center articles or snippets within the new support experience.

Siloing was also mirrored within the CX organization. Respective teams usually kept to themselves, developing their own roadmaps and systems in a vacuum. This affected broad organizational buy-in and visibility.

Additionally, the support site was built ad hoc over the years as the product grew, without a plan or framework, and was passed from team to team without clear ownership. This created loads of tech debt.

*Dropbox Learn used a headless CMS (Contentful), which made content reusable, but migrating everything to Contentful was way out of scope.

I entered the project as a content strategist and content designer, and soon became design team lead after helping to hire and oversee a UX designer and UX researcher.

Our design team represented a huge leap forward in the CX org, which had historically suffered from design atrophy due to under-resourcing of design talent and lack of design thinking.

The rest of the team were cross-functional partners, including a project manager, engineers, and program managers.

As a team, our allocation was limited, but gradually increased as we made small wins, gained momentum, and caught the attention of senior staff.

Our task as the design team was to articulate customer expectations, identify the most critical UX breakpoints, navigate senior leadership, create a roadmap, and launch an mvp, then iterate and repeat. Here's what happened:

I set up sessions with customers to walk through our support site and came away with 2 key insights about what users expect from a support experience:

1) How can this immediately solve my problem?

Nobody visits support sites for fun — it's a matter of getting in and getting out with a resolution, as quickly as possible.

2) Can I trust this support site?

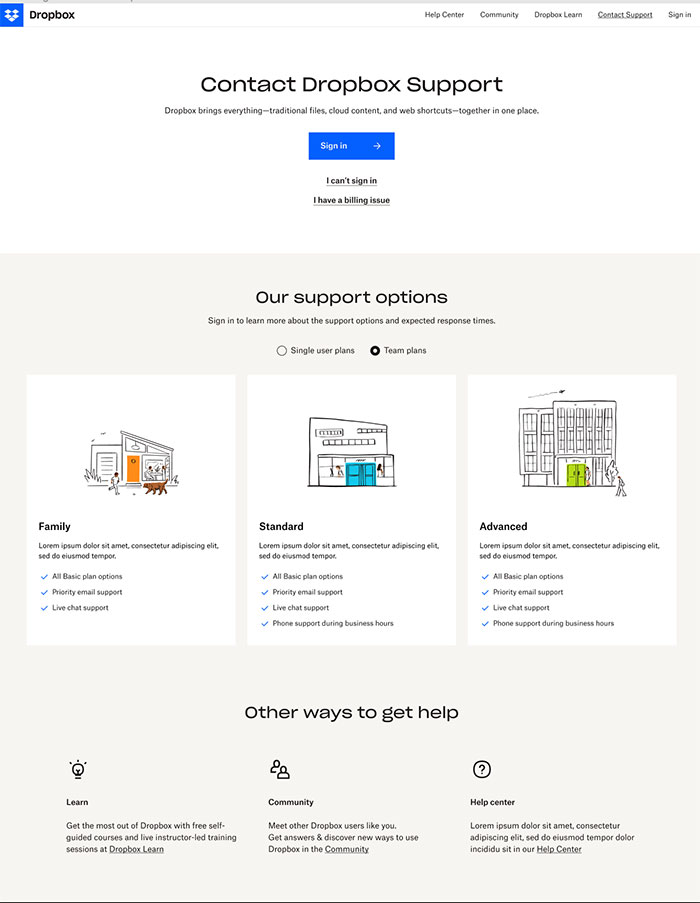

Customers quickly scan a support page for trust signals, namely a clear display of support entitlements (e.g., email, phone, live chat) and a way to contact a real person if they need to.

Data point: customers who trust a support site are more likely to use self-service content (i.e., read help articles) before resorting to 1:1 support, like a phone call or live chat.

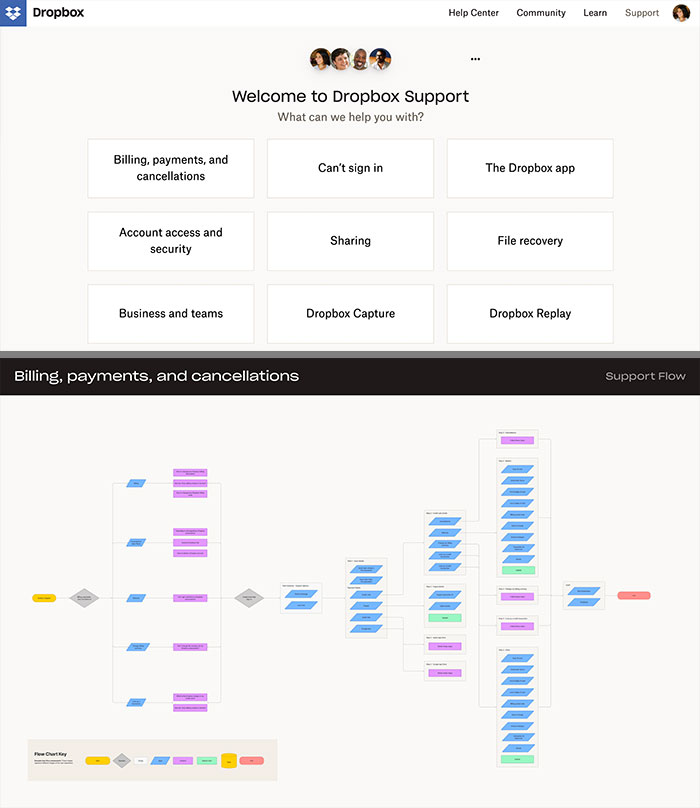

We knew the UX of support was "broken," but we needed to create a full mapping of what was broken, and why, so we could prioritize what to fix.

We created a heuristic test and ran the test with fellow designers from the Dropbox core product team. Some of the strongest feedback included:

😑 Lack of consistency and standards: the UI did not match the rest of the product or marketing, e.g., inconsistent copy and design elements that did not follow the Dropbox design system

😑 Flexibility and efficiency of use: the most glaring example was the email support form for billing issues, which directed a customer through a long series of conditional form logic, embedded out-of-date help content, and asked for too much input

😑 Visibility of system status: users entering a support flow weren't made aware of their support entitlements (e.g., looking for a live chat link when their SKU doesn't include live chat), or of when they could expect a response from support after submitting a ticket

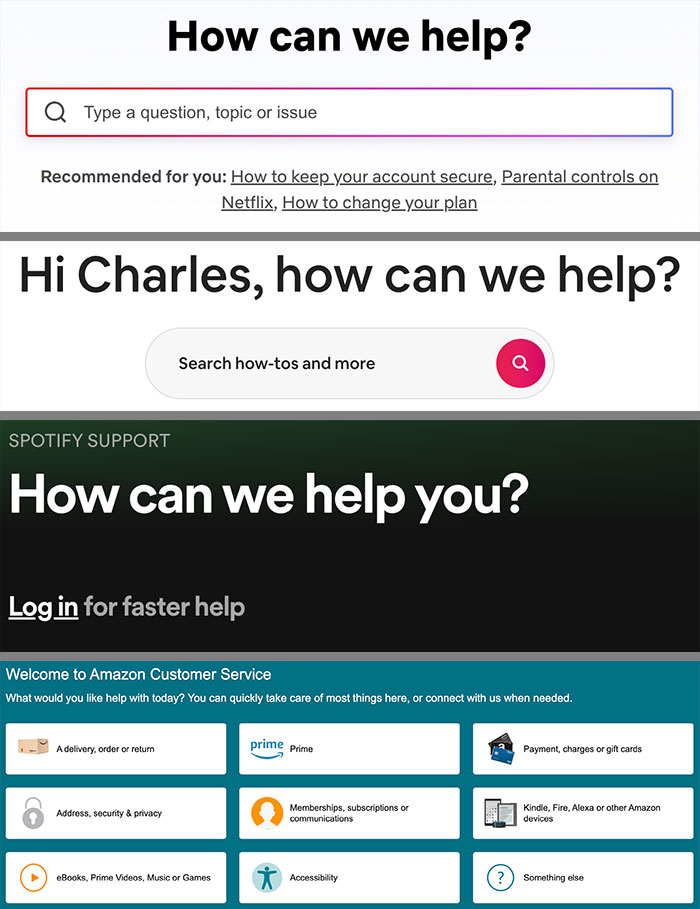

We also spent time auditing the support UX flows of giant SaaS unicorns, including Amazon, Airbnb, Facebook, Netflix, Spotify, and others. From this we observed some patterns:

💡 Forcing user login to personalize the support experience, run analytics, and create a unique support profile

💡 Presenting a card-based design pattern to have customers narrow down their issue type

💡 Gating 1:1 support (like live chat, email, and phone) with self-serve content (help articles)

💡 Conversational, human-centered UX copy that refers to support as "help": "Tell us what you need help with."

💡 Help articles and other support entitlements are seamlessly integrated into a single support channel rather than split across separate ones, as at Dropbox

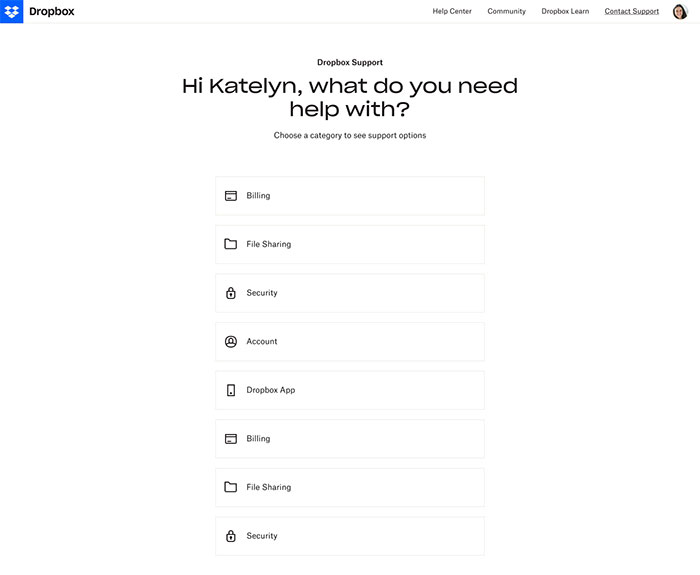

The team agreed on the mvp: a simplified card-based UX pattern where users narrow down their issue type by category and subcategory, then are shown a relevant help article.

The mvp was a scrappy proof of concept to convince leadership of the value of our work so we could keep growing. We had our fair share of constraints and did things that could not scale:

• To trim scope, we didn't require users to log in. Instead, the mvp would only display to customers already logged in who were on the Basic SKU (aka free plan).

• We had no content recommendation engine, so I collaborated with the help center content team to manually select the top-performing help center articles that were relevant to the Basic SKU, then create the IA for the cards framework. The dev team hard-coded the article listings into the mvp.

• Our success metric was basic: clicks on the help center article, which signaled that users had found value in the cards and the article headline.
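The hard-coded approach described above can be pictured with a minimal Python sketch. Every name in it (the categories, the article titles, the `"basic"` plan label, and both function names) is hypothetical and chosen for illustration; it is not the actual Dropbox implementation.

```python
from typing import Optional

# Hypothetical hand-curated IA: category -> subcategory -> article title.
# In the mvp these listings were hard-coded rather than recommended dynamically.
CARD_IA = {
    "Billing": {
        "Refunds": "How to request a refund",
        "Payment methods": "How to update your payment method",
    },
    "Syncing": {
        "Files not syncing": "Fix files that are not syncing",
    },
}


def should_show_mvp(logged_in: bool, sku: Optional[str]) -> bool:
    """Show the mvp only to customers already logged in on the Basic SKU."""
    return logged_in and sku == "basic"


def article_for(category: str, subcategory: str) -> Optional[str]:
    """Return the curated article for a card selection, or None if none exists."""
    return CARD_IA.get(category, {}).get(subcategory)
```

A lookup table like this is cheap to ship but has to be maintained by hand, which is why it could not scale past a proof of concept.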

The mvp was a modest but pivotal success. Customers were clicking on articles in the mvp, while at the same time Dropbox renewed its focus on and investment in the CX org, which included our team and project.

As design lead and content strategist, I helped chart the support product roadmap while increasing focus on the content recommendation engine:

Phase 2: determine the most salient analytics and heuristics to start weighting articles

Phase 3: surface relevant articles dynamically based on analytics model, use machine learning to improve the model

Phase 4+: personalized, AI-driven support platform

Each phase holds an incredible amount of technical and organizational complexity, far beyond the capacity of any one team or decision maker.

I was lucky enough to get a start on Phase 2...

To help me conceptualize the Phase 2 architecture, I leaned on ChatGPT with a detailed prompt (summarized here, output not shown):

Act like a content strategist with a high degree of technical architecture and content analytics knowledge, but also extensive editorial experience. You work at Dropbox on the customer experience (CX) team that is re-engineering the support experience. You have been tasked with creating a help center article recommendation engine for the customer support section of Dropbox.

The experience will start with customers in a UX flow selecting a category that matches their issue, and then narrowing down that by selecting a subcategory. The job has two parts:

1) Determine the optimal configuration of analytics (queries) in order for the system to recommend the most accurate and relevant article related to the customer’s issue, based on the set of heuristics below. This model will help us understand the data we’re measuring, as well as the value of the content, and the fidelity of the UX flow.

2) Generate more queries that measure improvements of the first set of queries in step 1.

Here are the analytics we have access to. Each article can offer these metrics: helpfulness score; CSAT (customer satisfaction); page views; traffic; SKU*; top trafficked article by organic search traffic; number of help center views per session; number of different surfaces by session (help center, community, /support); number of jumps between surfaces from one to another; group by previous URL and the 3 referrer fields in hc, /support, /get-help; group by issue category.

There are a few other important things to keep in mind:

• The business goal of this project is to reduce the number of inbound support tickets by offering customers self-serve content in the form of help center articles at this stage of the support journey. Therefore, we need to make sure articles surfaced can actually help customers with issues.

• “Best article” means: most relevant and most accurate

• *SKU: Customers who are in this support experience will be required to log in so that our system knows which SKU they are on. Some help center articles only pertain to certain SKUs, so we need to also make sure not to show articles that are not relevant to one’s SKU.

We want to build a data model using these heuristics that can eventually be fitted into an AI model that would dynamically populate articles based on each customer’s support profile.
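One way to picture the kind of data model the prompt describes is a weighted score over a few of the listed metrics, with a SKU filter applied first. This is a minimal sketch under stated assumptions, not the model the team designed: the `Article` fields, the weights, and the normalization are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Article:
    title: str
    category: str
    skus: frozenset       # SKUs the article applies to (hypothetical field)
    helpfulness: float    # assumed already normalized to 0..1
    csat: float           # assumed already normalized to 0..1
    page_views: int


# Hypothetical weights; real values would need tuning against ticket volume.
WEIGHTS = {"helpfulness": 0.5, "csat": 0.3, "page_views": 0.2}


def score(article: Article, max_views: int) -> float:
    """Weighted sum of metrics, with page views scaled by the largest view count."""
    views = article.page_views / max_views if max_views else 0.0
    return (WEIGHTS["helpfulness"] * article.helpfulness
            + WEIGHTS["csat"] * article.csat
            + WEIGHTS["page_views"] * views)


def recommend(articles, category: str, sku: str, limit: int = 3):
    """Drop articles outside the category or SKU, then rank the rest by score."""
    eligible = [a for a in articles if a.category == category and sku in a.skus]
    max_views = max((a.page_views for a in eligible), default=0)
    return sorted(eligible, key=lambda a: score(a, max_views), reverse=True)[:limit]
```

Filtering by SKU before scoring means an article that does not apply to a customer's plan can never be surfaced, however popular it is.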

My time at Dropbox ended as Phase 2 entered the later stages of design and prepping for handoff to the dev team.

I left with expertise in an entire product ecosystem (in this case, Dropbox support), along with experience leading a design team, navigating organizational politics and senior leadership, and collaborating intensely across functions.

The most interesting part of my work here was building an aspirational world-class structured framework for support content that was dependent on a multitude of complex inputs and system constraints.

It's worth noting that I also spent a great deal of time advocating for design principles within the CX org by cultivating allies with product and content designers across the company. I wouldn't normally mention this, but I feel it's important to understand that not all orgs within a large company see design equally, and any designer worth their salt should be prepared to articulate the business value of design across all levels.